We used the PlumbR package ( https://github.com/trestletech/plumber ) just to get a proof of concept up, but now that we want to get ready for higher loads, we need something scalable.

So - we checked whether Azure ML was a feasible option - and it looked very promising. So we ported our R code into an 'Execute R script' module in Azure ML, hooked up the data sources ( Azure SQL DB ) and published a web service.

Then we started testing this webservice, first through R, and got some unexpected results:

Request Id de01a645-4a3b-4ba8-827d-758f7e2ca0b5 took 2.43389391899109 seconds

Request Id fe6383ae-f2a7-467e-a580-0e215d9c79df took 23.6202960014343 seconds

Request Id 9895fb95-e261-4518-a062-276c6f9d3d11 took 2.31389212608337 seconds

Request Id 1d15ccc0-6d3d-414c-9320-c737ee0976b8 took 27.6219758987427 seconds

Request Id a8bf5942-8640-4b25-97df-ce7960109ec2 took 23.4478011131287 seconds

Request Id 88b8fd54-fe31-4261-a601-d57f13babed8 took 18.3553009033203 seconds

Request Id cde2311b-fa53-441e-a099-6bfcbd66860e took 2.30307912826538 seconds

Request Id a0a00ece-1b39-4c24-a636-f43bf9566a25 took 24.1078689098358 seconds

Admittedly, we were aiming for a sub-second response, but we would settle for 2 seconds if the solution was at least scalable and leveraging PaaS. The 23 seconds was way too much though, and we had a hard time figuring out where it came from. So - we decided to scale out the web service to see whether it would run faster.

Now, the strange thing was - the average response time went up! That was against all our expectations. Thanks to the chat button in Azure ML I got in contact with some of the guys over at Microsoft and they helped me out.

What happens is that if you set the slider to '5 concurrent calls', 5 environments will be provisioned for you in the background. However - each of these needs a bit of warmup time on its first call ( approx 20 seconds in our setup ). So - in the requests listed above - the first call hit a 'warm' environment, the second call a 'cold' environment and so on.

If you fire sequential calls ( the calls from R ), there is no way of telling whether you will hit a pre-warmed-up environment or not. That's why the response times differ so much. However - once everything is warmed up - response times will be consistent at approx 2 seconds.
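To make the warm/cold split visible, here is a small Python sketch that groups the eight timings listed above. The 10 second cut-off is our own assumption, picked only because it sits comfortably between the two clusters; it is not something Azure ML reports.

```python
from statistics import mean

# Response times in seconds, copied from the eight requests listed above.
timings = [
    2.43389391899109, 23.6202960014343, 2.31389212608337, 27.6219758987427,
    23.4478011131287, 18.3553009033203, 2.30307912826538, 24.1078689098358,
]

# Assumption: any call slower than this hit a cold environment.
COLD_THRESHOLD_S = 10.0

warm = [t for t in timings if t < COLD_THRESHOLD_S]
cold = [t for t in timings if t >= COLD_THRESHOLD_S]

print(f"warm calls: {len(warm)}, mean {mean(warm):.2f} s")
print(f"cold calls: {len(cold)}, mean {mean(cold):.2f} s")
print(f"overall mean: {mean(timings):.2f} s")
```

On these eight samples, the gap between the two groups lines up roughly with the 20 second warmup mentioned above.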

Now - if you scale up your environment ( say, to 200 concurrent calls ) - you will have to sit out the warmup time 200 times, once per environment, before every environment has been hit. That means that your average response time goes up! However - once they're all warmed up - you can have 2 second response times for 200 concurrent calls consistently.
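The effect on the average can be worked out with a bit of arithmetic. The sketch below is a simplified model, not a description of how Azure ML schedules calls: it assumes every environment pays the warmup exactly once, a warm call takes 2 seconds, and a cold call takes the warm time plus a 20 second warmup. The function name and the figure of 1000 calls are ours, for illustration only.

```python
def average_response_time(total_calls, environments, warm_s=2.0, warmup_s=20.0):
    """Mean latency if each environment pays the warmup exactly once (assumption)."""
    cold_calls = min(total_calls, environments)
    warm_calls = total_calls - cold_calls
    total = cold_calls * (warm_s + warmup_s) + warm_calls * warm_s
    return total / total_calls


# Same number of calls, different slider settings.
for envs in (5, 20, 200):
    print(f"{envs:>3} environments: {average_response_time(1000, envs):.2f} s average")
```

Under these assumptions, the more environments you provision, the more of your test calls land on a cold one, so the average over a fixed-size test run climbs even though the steady-state time stays at 2 seconds.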

So that explains why we were confused with our initial tests.

Now - another thing to keep in mind is that if your Azure ML service consumes a data source - that data source needs to be able to deal with the higher loads too. We're talking to an Azure SQL database and we had 2 second response times on 20 concurrent calls - but 7 seconds on 50 concurrent calls. After scaling up our SQL Azure DTUs - that response time went back down to 2 seconds, and all was good again.
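If you don't have a load testing tool at hand, something along these lines gives a rough picture of latency at a given concurrency level. It is a sketch only: `fake_service_call` is a hypothetical stand-in that just sleeps, and you would swap in your own request to the web service. The helper names are ours.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def timed_call(call):
    """Run call() and return how long it took, in seconds."""
    start = time.perf_counter()
    call()
    return time.perf_counter() - start


def measure(call, concurrency, rounds=3):
    """Fire `concurrency` calls at once, `rounds` times; return all latencies."""
    latencies = []
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        for _ in range(rounds):
            futures = [pool.submit(timed_call, call) for _ in range(concurrency)]
            latencies.extend(f.result() for f in futures)
    return latencies


def fake_service_call():
    # Hypothetical stand-in for the real web service request.
    time.sleep(0.01)


for level in (20, 50):
    results = measure(fake_service_call, level)
    print(f"{level} concurrent calls: mean {mean(results):.3f} s, max {max(results):.3f} s")
```

Running the same measurement at 20 and then 50 concurrent calls is how a downstream bottleneck like the database would show up: the service settings stay the same, but the mean creeps up.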

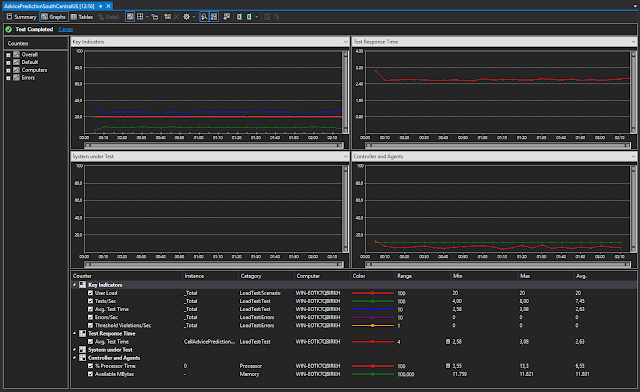

Let me share some screenshots - simple VS load test on 20 concurrent calls:

I think the containers were already pretty much warmed up - because the performance is around 2.5 seconds from the get-go.

If you raise the consistent load to 25 - while your service is set to 20 concurrent calls - you'll get exceptions:

This is something you want to keep in mind - and you should probably implement a retry strategy somewhere.
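A retry strategy could look something like the sketch below: retry with exponential backoff and a bit of jitter. This is one common approach, not something prescribed by Azure ML. The function name, the default delays and the `flaky` demo function are all made up for illustration, and in real code you would catch only the specific exception your client raises when the service rejects a call.

```python
import random
import time


def call_with_retry(call, max_attempts=5, base_delay_s=0.5, sleep=time.sleep):
    """Run call(); on an exception, wait and try again with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except Exception:  # assumption: narrow this to your client's throttling error
            if attempt == max_attempts:
                raise
            # Double the delay each attempt; jitter keeps retries from arriving in lockstep.
            delay = base_delay_s * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))


# Demo with a hypothetical call that fails twice before succeeding.
attempts = {"n": 0}

def flaky():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RuntimeError("service busy")
    return "ok"

print(call_with_retry(flaky, base_delay_s=0.01), "after", attempts["n"], "attempts")
```

Backing off instead of retrying immediately matters here: the exceptions appear because the service is already at its concurrency limit, so hammering it again right away would only add to the load.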

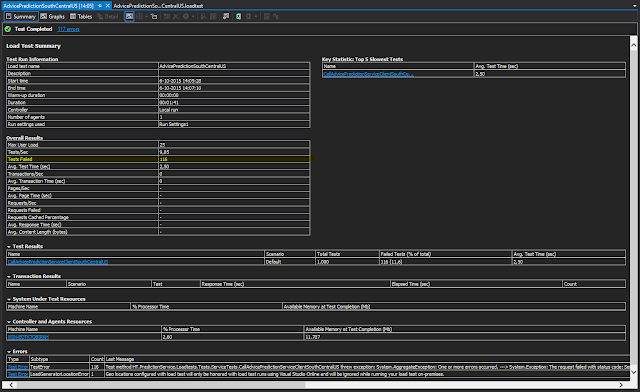

Now scaling out to 50 concurrent calls:

Every peak is a load test that we ran. If you zoom in on the peak - it turns out that the DB was running at around 90% of the DTU capacity. So - we scaled it up to 50:

Re-ran the tests - and presto:

Test response times even went under 2 seconds. In other words - the DB was a bit of a bottleneck in the first tests too.

Anyway - last Friday we switched from PlumbR to Azure ML and all our predictions should now be able to scale out nicely.

Big thanks to Yoav Helfman, Akshaya Annavajhala and Pavel Dournov over at Microsoft for helping me figure this out.
